
Automatic Generation of Color Schemes with AI

Using state-of-the-art techniques originally developed for language modeling, we have created an AI model that can generate color schemes that match human descriptions. This model can be used to tune the visual design of automatically generated content in applications ranging from marketing to human-computer interaction to the arts.

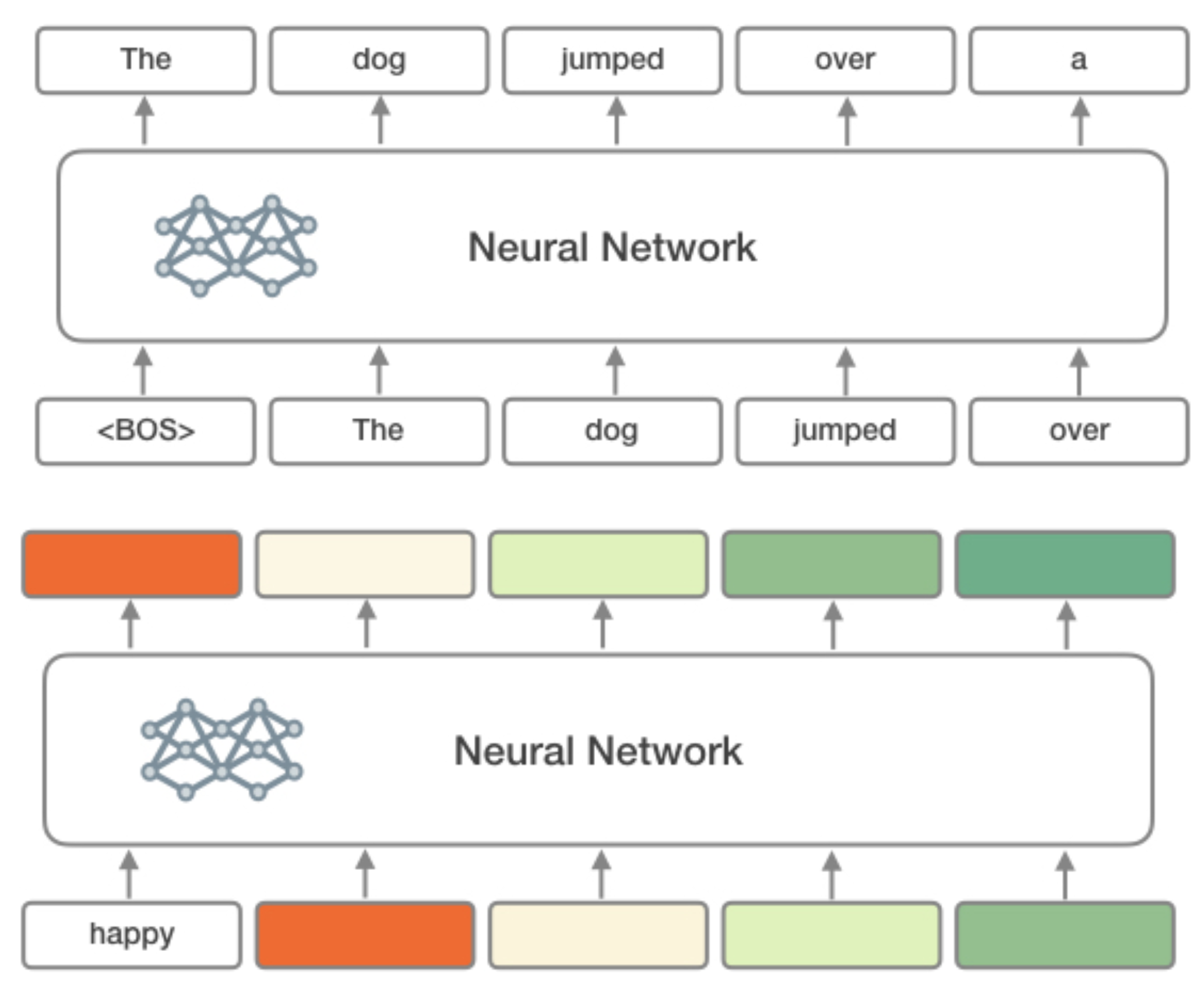

Our system is based on an architecture that has recently enabled groundbreaking advances in natural language processing. A language model such as OpenAI’s GPT-2 learns to predict the next word in a sentence given all the words that came before it.

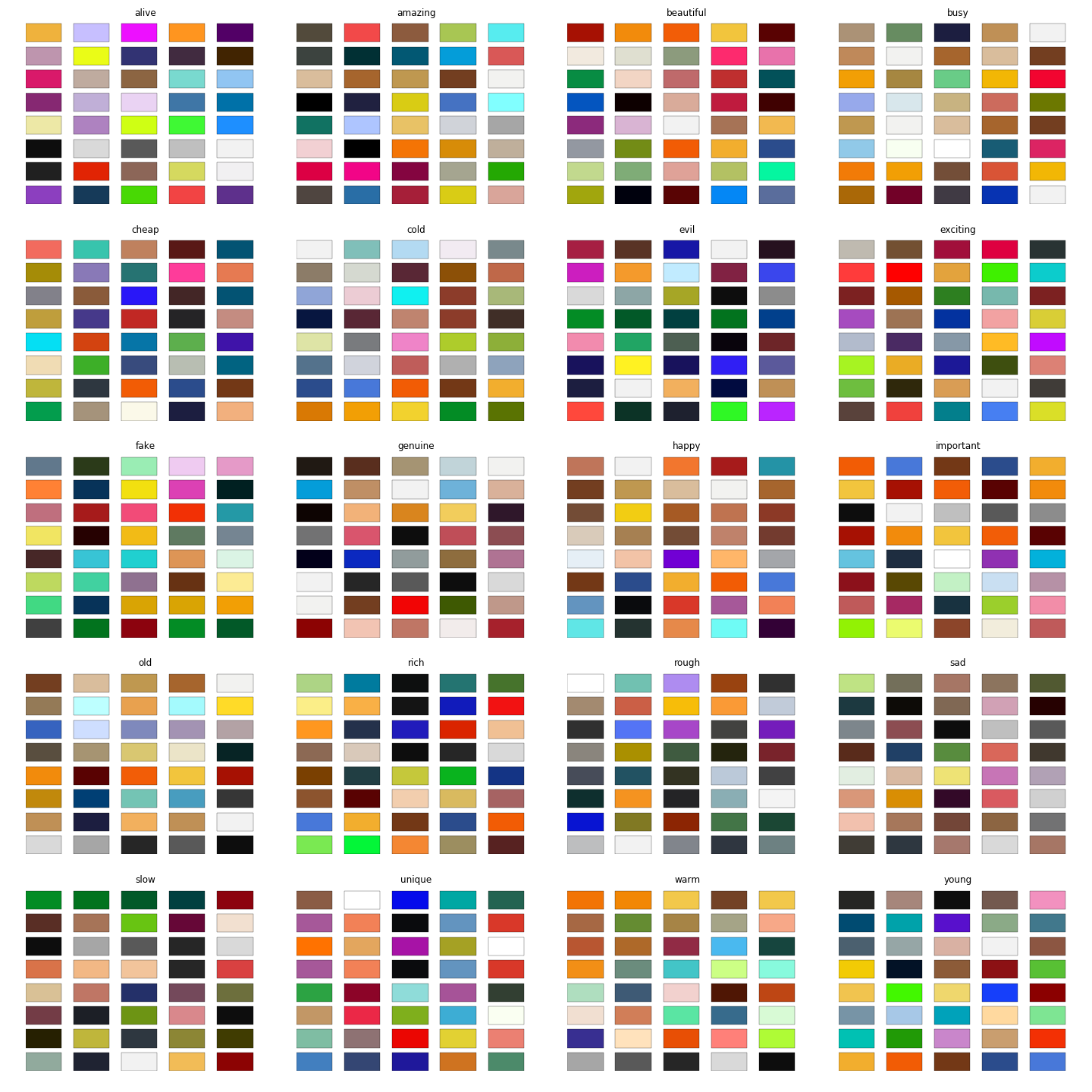

By treating a sequence of colors as a ‘sentence’, and each color’s red, green, and blue values as ‘words’, we can apply language modeling techniques to teach a model to generate novel sets of colors. To control what is generated, we additionally condition the model on a context keyword describing an abstract concept such as `happy`, `old`, or `energetic`.
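To make the ‘sentence’ analogy concrete, here is a minimal Python sketch of how a palette could be flattened into a token sequence. The token format (`R255`, `G200`, ...), the `<keyword>` prefix, the `<end>` marker, and the function names are all assumptions made for illustration. The post does not say how the sequence is actually encoded or how the keyword is fed to the model.

```python
from typing import List, Tuple

Color = Tuple[int, int, int]  # (red, green, blue), each in 0..255


def encode_palette(keyword: str, palette: List[Color]) -> List[str]:
    """Flatten a palette into a 'sentence' of channel tokens.

    Hypothetical format: the conditioning keyword comes first, then one
    token per color channel, then an end marker.
    """
    tokens = [f"<{keyword}>"]
    for color in palette:
        for channel, value in zip("RGB", color):
            if not 0 <= value <= 255:
                raise ValueError(f"channel value out of range: {value}")
            tokens.append(f"{channel}{value}")
    tokens.append("<end>")
    return tokens


def decode_palette(tokens: List[str]) -> List[Color]:
    """Rebuild the list of colors from a token sequence."""
    # Skip the keyword and end markers, which start with '<'.
    values = [int(t[1:]) for t in tokens if not t.startswith("<")]
    if len(values) % 3 != 0:
        raise ValueError("incomplete color in token sequence")
    return [(values[i], values[i + 1], values[i + 2])
            for i in range(0, len(values), 3)]
```

With this encoding, a language model trained on such sequences would predict the next channel token given the keyword and all previous channel values, in the same way a text model predicts the next word.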

To build a training dataset, we collected thousands of color schemes, along with their user-provided tags, from design repositories on the internet. We filtered out color schemes with repeated colors or with fewer than 5 colors. We also filtered out any keywords that weren’t found in the GloVe word embedding dataset.
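The filtering rules can be sketched roughly as follows. This is an illustrative Python sketch, not our actual pipeline: the `filter_schemes` function, the `(colors, tags)` data layout, and the `known_words` set (standing in for the GloVe vocabulary) are hypothetical names.

```python
from typing import List, Set, Tuple

Color = Tuple[int, int, int]
Scheme = Tuple[List[Color], List[str]]  # (colors, user-provided tags)


def filter_schemes(schemes: List[Scheme],
                   known_words: Set[str],
                   min_colors: int = 5) -> List[Scheme]:
    """Apply the three filtering rules described in the text."""
    kept = []
    for colors, tags in schemes:
        # Rule 1: drop schemes with fewer than `min_colors` colors.
        if len(colors) < min_colors:
            continue
        # Rule 2: drop schemes that repeat a color.
        if len(set(colors)) != len(colors):
            continue
        # Rule 3: keep only tags present in the embedding vocabulary.
        # What happens to a scheme left with no tags is not stated in
        # the text; this sketch keeps it with an empty tag list.
        valid_tags = [t for t in tags if t in known_words]
        kept.append((colors, valid_tags))
    return kept
```

In practice `known_words` would be loaded from a GloVe vocabulary file; here any set of strings works.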

By bridging visual design with an understanding of the language concepts associated with colors, we hope this technology will enable AI applications that can interact with humans more effectively. As an example, we used our AI model to recolor a photo of a cute Shiba Inu using a variety of concepts. We look forward to seeing where this technology will lead!